
Twitter has launched a “purge” of its platform, shutting down accounts based on new rules against abuse and threats of violence.

The notorious leaders of Britain First, Jayda Fransen and Paul Golding, along with the English Defence League and Europe’s far-right Generation Identity movement, have already had their Twitter accounts permanently suspended.

Other infamous white nationalist and white supremacist figures in the USA, such as Jared Taylor, have also had their accounts taken down. League of the South’s Hunter Wallace (@occdissent) and the Traditionalist Worker Party (@tradworker) are also banned.

Other purged accounts include neo-Nazi leader Jeff Schoep (@nsm88) and Vanguard America (@vanamofficial), the group that alleged murderer James Alex Fields stood with in Charlottesville. The American Nazi Party’s account (@anp14) is gone, too.

Twitter announced the changes to its policies on hateful speech in November.

Under the new rules enforced today, users are no longer able to use “hateful images or symbols” in profile images or headers and “may not affiliate with organizations that – whether by their own statements or activity both on and off the platform – use or promote violence against civilians to further their causes”.

We’ve updated our rules around abuse and hateful conduct as well as violence and physical harm. These changes will be enforced starting December 18. Read our updated rules here: https://t.co/NGVT3qGFvg

— Twitter Safety (@TwitterSafety) 17 November 2017

The company has touted the guidelines as simply a clarification of existing rules, but it appears to acknowledge the vast amounts of hate, white supremacist messages and swastika-toting Pepes appearing on the platform.

Ever since Twitter announced the changes in its guidelines, activists and NGOs campaigning against online hate have been cautiously optimistic that the social media platform was taking concrete steps against rhetoric that encourages hate and violence.

Those who had previously raised concerns over allowing members of the far right to use Twitter to spread hate-filled rhetoric also welcomed the change.

Bravo @twitter for taking down the vile Britain First, Jayda Fransen and Paul Golding accounts.

Thank you, thank you !#TwitterPurge #ResistingHate @hopenothate @Far_Right_Watch @ResistingHate @matthaig1 @JamesMelville @labourlewis @sturdyAlex @AbiWilks @MrsNickyClark pic.twitter.com/cyUcikPUXH— Helen Cherry (@hellenjc1954) 18 December 2017

However, those on the Right have been very critical, encouraging supporters to migrate to alternative platforms such as Gab, which is uncensored, and claiming that Silicon Valley is determined to silence opposing voices.

Andrew Torba, founder of Gab, has been critical of Twitter’s decision to monitor users offline.

“This is a scary precedent to set,” he wrote in an email to Mashable. “Rules like this will only force dissidents and those who are speaking truth to power to silence themselves or risk being silenced by Twitter.”

However, Joe Mulhall, senior researcher for HOPE not hate, said:

“This is not an issue of free speech. Twitter is privately owned and has the right to choose to deny neo-Nazis use of its platform. They are free to spew hate elsewhere.”

Gab is only a fraction of Twitter’s size – 300,000 users compared to more than 300 million – and is already home to a large number of far-right accounts. Many users who gained a huge following supporting Trump during his presidential campaign moved over to Gab when they were later banned from Twitter.

“Nearly everyone on it seems to embrace some type of right wing ideology—meaning there are very few liberals around for trolls to target with hate speech. Trump isn’t on it, so his fans rely on an account that reposts what the president writes on Twitter as an alternative… Nevertheless, Gab is where some worried Trump supporters expect to wind up after Monday,” according to Newsweek.

Brad Griffin, the self-proclaimed Southern nationalist behind the “White Lives Matter” rally in Tennessee this October, wrote on his @occdissent Twitter account: “In light of the upcoming Twitter purge, follow me [on Gab].”

Some neo-Nazi accounts have been calling 18 December the ‘great shoah’ing’, playing on the Hebrew word for Holocaust. Others have been using memes as a countdown until their accounts are removed.

“We are aware these banned individuals will not end their activities and are likely to migrate to less monitored platforms like Gab,” says Mulhall. “While their ability to recruit supporters, propagate hate and impact the wider society will be severely limited, these platforms will allow them to better organise themselves.”

Twitter had already taken half-measures against some high-profile white nationalist and supremacist accounts last month, stripping verified status from alt-right figurehead Richard Spencer and from Jason Kessler, the organiser of the Charlottesville ‘Unite The Right’ rally, among others.

Twitter’s latest measure echoes moves by other social media platforms to tackle online hate.

The large social media companies have had an increasingly bad year in terms of PR as criticism over white supremacists and neo-Nazis on their platforms has increased.

Hundreds of major brands have pulled advertising from YouTube, owned by Google, after revelations that their ads were being placed next to extremist or hateful content.

Facebook was one of several social media giants to increase the number of its moderators, and earlier this month Google announced it was hiring 10,000 moderators for YouTube. The company has been criticised for failing to safeguard children from extremist content.

HOPE not hate has also combatted the rhetoric of far-right figures online through several avenues.

In 2017 we put pressure on payment platform Patreon to ban Lauren Southern, a far-right political activist, from the platform. We also showed how anti-Muslim activists exploit terror attacks and amplify their messages with ‘bot armies’.

A HOPE not hate exposé also revealed how a Breitbart writer was running a secret right-wing “free speech” Facebook group, the Young Right Society (YRS), frequently awash with appalling racist and ‘alt-right’ content.

The impact users can have on social media platforms has been proven through the 2016 US election and Brexit in the UK. Russia-linked Twitter accounts were even used to “extend the impact and harm” of four 2017 terrorist attacks in the UK, according to a study at Cardiff University.

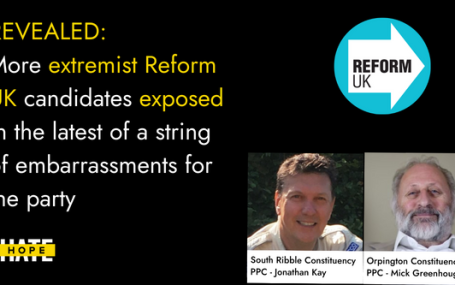

HOPE not hate reveals two more extremist candidates from Reform UK, in the latest of a string of embarrassments for the party UPDATE: Just hours…