Over recent months HOPE not hate has watched with increasing alarm as violent, racist and harmful videos are posted on social media platforms using the video-sharing platform BitChute as their host – circumventing the ban that most major platforms have placed on the sharing of this content.

BitChute is a UK-based video-sharing platform – founded by tech entrepreneur Ray Vahey in 2017 – whose stated purpose was to offer a platform for content that had been banned from other sites.

Vahey said that his idea for the site came from:

“seeing the increased levels of censorship by the large social media platforms in the last couple of years. Bannings, demonetization, and tweaking algorithms to send certain content into obscurity and wanting to do something about it.”

With this as a core mission, it comes as little surprise that the site has attracted a vast array of vile – and often illegal – content.

This report shows:

This cannot be allowed to go on. Change is needed:

BitChute’s behaviour is a particular challenge for the social media platforms where BitChute content is shared. As a result of our research, Facebook have deleted swathes of links to BitChute posted on their own platform.

Erin Saltman, EMEA Head of Counterterrorism and Dangerous Organisations Policy for Facebook, told us: “Hate speech, violent extremist groups and dangerous organisations have no place on our platforms and we are grateful to HOPE Not Hate for sharing their research around new methods used to promote this content online. We have taken firm action based on the findings of this report to ensure that hate-based organisations are not on our platform, removing and blocking the vast majority of content shared with us. We will continue to work with partners and experts to stay ahead of those seeking to spread hate and violent extremist content.”

This is a welcome move. But more is needed to reduce the harm done by BitChute’s behaviour. We’ll be pressing for social media companies, the police, and the Government to take action.

Read the full report now.

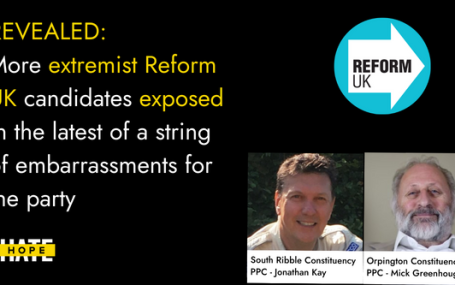
