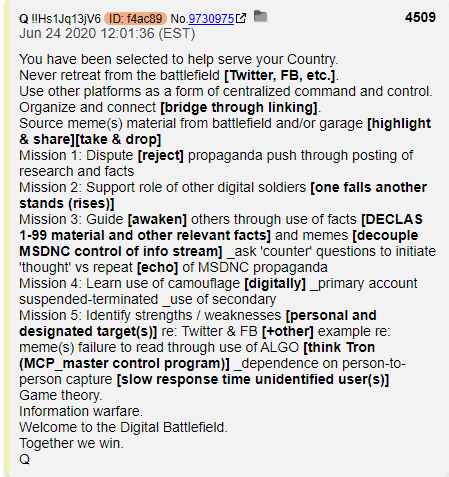

To perhaps a greater degree than any comparable movement, QAnon is a product of the social media era. Aside from the occasional QAnon placards that could be seen at Donald Trump rallies and the emergence in late summer 2020 of anti-lockdown and #SaveOurChildren street protests, this ideological movement could rarely be seen outside of its home on social media platforms and web forums.

It is unlikely that it could have sprung into life anywhere else. Without the anonymity provided by the 4chan and 8chan bulletin boards, Q could not have kept up the charade of their assumed identity, nor could they have found a more receptive audience than the users of those platforms. Host to a legion of bored, alienated and predominantly far-right users, the /pol/ forum of 4chan was almost uniquely suited to host the birth of a movement that combined conspiracy theory, a promise of violent retribution against a liberal elite and, importantly, the encouragement of the audience to participate by conducting “research” of their own. This element of gamification has remained of central importance to the movement even as it spread through other social media platforms, but was particularly suited to the “anons” of 4chan, notorious for their collective endeavours in promulgating hoaxes and targeted harassment.

Q’s reach would have remained fringe, however, if it had been limited to 4chan and 8chan. It was the movement’s spread onto the mainstream social media platforms – and from there onto the streets – that made this phenomenon into a global concern, one that could do long-term damage to the US political environment and that has an unknown potential for similar harm around the world.

Even prior to the explosion of interest in conspiracy theories as the pandemic struck, QAnon had become a visible and viral presence online. Prominent promoters of the theory had gathered hundreds of thousands of followers on Twitter and YouTube, while QAnon Facebook groups had grown to tens of thousands of members.

Over the summer of 2020, both Twitter and Facebook finally began to crack down on the QAnon groups, pages and accounts that had proliferated on their platforms for almost three years. In July, Twitter announced that it had removed 7,000 accounts and “limited” 150,000 others, reducing their reach and limiting their ability to gain new followers. Facebook and Instagram followed in August, removing 900 QAnon groups and pages and limiting more than 10,000 accounts, though restricting the move to those that explicitly promoted violence.

This appeared to have had a positive impact by a number of measures. Traffic to QMap.pub, the most popular site to access Q’s posts, fell by almost a quarter. QAnon slogans were prevented from appearing in the trending sections of all three platforms, and although many of those suspended returned to set up new accounts, their reach was severely limited by the loss of their sometimes huge follower counts.

In October, both Facebook and YouTube went further still, announcing that they would attempt to remove QAnon’s presence from both their platforms entirely. This was followed by what appears to be swift and decisive action; the BBC’s Shayan Sardarizadeh reported that 80% of the Facebook groups and pages he had been monitoring were deleted in the 24 hours after the announcement, while many of the largest QAnon channels on YouTube disappeared within hours of their own announcement on October 15.

It remains to be seen whether Twitter and other platforms will follow up with similarly decisive measures, and whether any will have sufficient resolve to prevent the movement from simply reappearing under new guises. Whatever happens next, any actions taken now cannot begin to reverse the damage that three years of largely unchecked proliferation on social media platforms has done.

Research by the Institute for Strategic Dialogue (ISD) found that global membership of QAnon Facebook groups soared by 120% in March 2020, a growth rate that might have been even more pronounced in the UK. Prior to March, HOPE not hate had identified just three UK-specific Facebook groups devoted to QAnon, with a combined membership of fewer than 5,000. By mid-May, this number had grown to ten or more, with a fast-growing membership of over 20,000. However, groups devoted specifically to the promotion of QAnon represent only a minor element of its rise.

In March and April this year, the creep of QAnon messaging into ostensibly unrelated groups and movements became starkly apparent to those studying the broader conspiracy theory milieu. In the UK, the anti-5G movement had been around for years, but suddenly leapt into public consciousness as people began to associate the rollout of the technology with the pandemic. But as anti-5G groups began to add tens of thousands of members, they became a melting pot of alternative conspiracy theories, with QAnon playing an ever-increasing role.

This is, again, a feature of how social media is structured. In 2019, Facebook began to pivot from their previous prioritisation of the public news feed towards encouraging the growth of private groups. Citing a growing desire for private and segmented conversations over the default of sharing everything with one’s wider friends list, the move had the effect of shifting dialogue into largely self-governing spaces, with content moderation left in the hands of the group’s founder and their self-selected team of moderators. While individual members are able to report content to Facebook’s own moderators if they wish, this still relies on a self-selected group to both identify harmful content and be motivated to push for its removal.

QAnon content can become widespread even if group moderators are opposed to the movement. There is no minimum number of moderators required for a group; a group with a hundred thousand members and thousands of posts per day can have just one or two admins, giving them little ability to oversee the content posted even if they wished to do so. Groups that are set up for a particular purpose are at perpetual risk of being derailed by constant off-topic posting, and so the extent to which a group serves the purpose it was created for depends entirely on whether the group’s founder is motivated to keep it on track by reviewing content and recruiting a sufficient number of moderators who will take their responsibilities seriously.

For conspiracy theory groups, this dynamic has predictably meant that a group set up to promote one particular theory, whether it be Flat Earth, anti-vaccines or UFOs, often becomes a free-for-all of alternative ideas, sometimes more toxic and harmful than the group’s titular purpose. These groups can then operate as a gateway from less extreme conspiracy theories towards grand superconspiracies, as group members seek a wider explanation for the particular conspiracy that the group details.

These groups have proven to be fertile ground for QAnon in part because it provides an apparent motive for smaller conspiracies. The logical progression for anyone who comes to believe that 5G internet radiation is lethal, for example, is to question why our telecom companies, healthcare providers and governments would be conspiring to inflict such harm and conceal the evidence of it. QAnon’s assertion that global governments are controlled by a cabal of Satan-worshipping paedophiles seems outlandish to most, but less so to someone who has spent weeks or months being bombarded by posts about a less macabre but still deeply troubling sub-theory.

Similar processes take place in anti-vaccine groups, such as “Collective Action Against Bill Gates. We Won’t Be Vaccinated!!”, a group that was set up by British Facebook users in mid-April 2020 and gathered almost 190,000 members before its deletion by Facebook in late September. QAnon posts became a regular feature of the group, due to the QAnon community’s near-unanimous COVID-denial, and because the Satanic cabal narrative provided a more detailed motive for the supposed wickedness of Gates and other pro-vaccination campaigners.

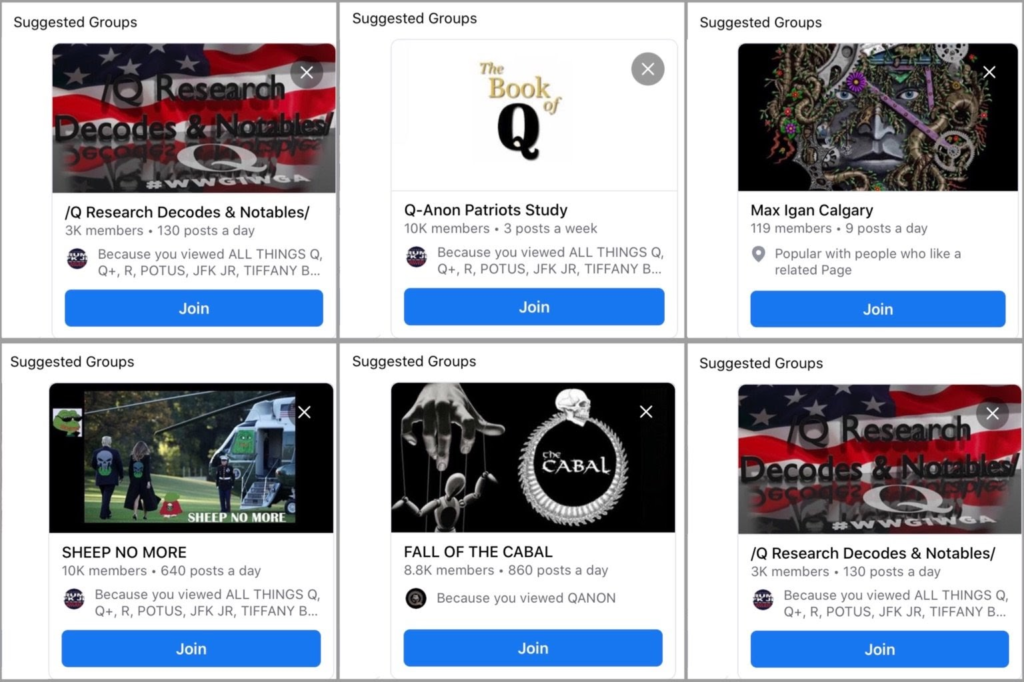

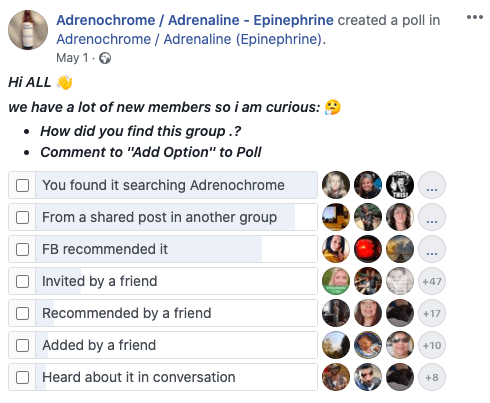

Other aspects of Facebook’s structure have also helped to boost QAnon to its current strength. Facebook has long used recommendations to promote pages and groups to its users, its algorithms generating suggestions based on the social media activity of both the user and their friendship group. While this feature might be beneficial when recommendations are based on hobbies or professional interests, it can become dangerous when it comes to the worlds of divisive politics, disinformation and conspiracy theory.

QAnon and other conspiracy theory groups promoted by Facebook

These recommendations appear to have a significant impact on the growth of conspiracy theory groups. In the poll from a QAnon group (pictured), over a quarter of the 950 respondents claimed to have joined the group as a result of a recommendation by Facebook. The group in question, called “Adrenochrome / Adrenaline – Epinephrine”, was devoted to the QAnon/Pizzagate sub-theory that claims that a vast network of celebrities and politicians are trafficking, torturing and murdering thousands of children in order to harvest their adrenal glands for use as an elixir of life and recreational drug. That Facebook’s algorithms were directing its users towards this particularly ghoulish element of QAnon lore is a vivid example of the harms that such recommendations can do.

Not only do such recommendations appear to be a major route by which people join these groups, but they might also draw in those on the fringes of the conspiracy theory world. A Facebook user whose friend shares a post from a QAnon group might be tempted to click through to the group from mere curiosity; from that moment onward, the algorithm will have registered their interest in the topic and present them with groups and pages that reaffirm those narratives. Facebook’s decision to remove all QAnon content will, if followed through on properly, solve this issue in this specific case. But it is vital that all platforms address the wider issue of algorithmic recommendations, which will continue to aid the development and spread of harmful ideologies unless radically overhauled.

Prior to the pandemic, Twitter and its legion of QAnon influencers were perhaps even more influential on the movement than Facebook and Instagram. Twitter’s removal of 7,000 QAnon accounts in July suspended many of its most prominent cheerleaders. But as with Facebook’s actions in August, there were some very prominent and inexplicable omissions. The largest account devoted solely to promoting QAnon, PrayingMedic, remains on the platform and continues to preach his divisive message to his 400,000+ followers, as well as directing them to his YouTube channel. Many other promoters with hundreds of thousands of followers have similarly remained untouched, and it is unclear what criteria Twitter used to identify accounts for removal.

The appeal of Twitter to QAnon is similar to that for any aspiring movement or ideology; it allows an unparalleled reach and the ability to see and be seen by people well outside your own networks and echo chambers. It is also President Trump’s preferred medium, and allows QAnon supporters to support his messaging and berate his opponents in a much more public and accessible way than other platforms. Trump has himself assisted the growth of QAnon on Twitter by his over 200 retweets of its proponents, including tweets that contain QAnon slogans and hashtags.

The use of hashtags has also proven invaluable to QAnon. The movement’s instantly recognisable slogans, such as #WWG1WGA and #QSentMe, allowed supporters to connect with one another and also boost the movement’s wider visibility. Moreover, utilising unrelated hashtags created for specific news events or conversations enables tweets to gain visibility among users who do not follow your account; events such as the death of Jeffrey Epstein, the killing of George Floyd and the COVID-19 pandemic allowed QAnon supporters to place their narratives into the consciousness of the wider population, by joining those conversations through related hashtags.

Twitter’s importance to QAnon is illustrated by the response of its key influencers to the wave of bans they’ve faced. As part of our efforts to help social media companies to remove the toxic influence of QAnon from their platforms, HOPE not hate researchers have reported 54 separate QAnon Twitter accounts that were evading a previous ban from the platform, with a combined following of 883,081. Many of these users create new accounts as soon as their old one is suspended; we have been responsible for the removal of six separate accounts from a single user, who goes by the nickname InevitableET. While such activity highlights the difficulties of permanently removing users, it still causes significant disruption to their efforts: InevitableET’s original account had 280,000 followers, while none of his recent accounts have managed to top 15,000 before their removal.

YouTube has played an essential role in the dissemination of QAnon narratives since the very earliest days – in fact, YouTube is the gateway by which it first spread into the mainstream. Just one week after the first 4chan posts by Q, a YouTuber named Tracy Diaz, who had previously covered the related Pizzagate theory extensively, produced a video summarising the emerging narrative from Q, bringing it to the attention of the wider conspiracy theorist community for the first time.

A huge community of QAnon interpreters emerged on YouTube over the following years, developing vast audiences for videos in which they dissect Q’s posts and analyse the news cycle through the lens of QAnon. Some, like Diaz, had preexisting channels that pushed hyperpartisan right-wing conspiracy theories, but soon accrued vast audiences after adopting QAnon. The X22 Report channel, for example, had been producing doom-laden videos predicting imminent economic and societal collapse for over four years prior to the arrival of QAnon in October 2017, and had amassed 145,000 followers in that time. By the summer of 2017, the channel was already preoccupied by the supposed “deep state” plot against Donald Trump, and in many ways illustrated the cultural milieu from which Q would later emerge. But its follower count would then rocket to over 944,000 over the next three years as the channel owner hitched his wagon to the QAnon conspiracy theory.

Analysis by ISD found that 20% of Facebook posts promoting QAnon contained links to YouTube videos, illustrating the importance of video content to the movement. This importance was illustrated most strikingly by the emergence in 2020 of a spate of documentaries that promoted aspects of the QAnon narrative, such as Fall of the Cabal, Out of Shadows and Plandemic. These videos received millions of views on YouTube, among other platforms, and the high production value of the latter two gave the subject matter a veneer of professionalism that individual bloggers and video producers could not provide.

Following the announcement that YouTube would remove “conspiracy theory content used to justify real-world violence”, many of the most prominent QAnon channels were removed, including the X22 Report, PrayingMedic and others mentioned in this report. Many of those users had already set up backup channels on largely unmoderated video platforms such as BitChute, the owner of which has expressed support for conspiracy theories and welcomed their proponents onto the platform. Yet none of the alt-tech sites can begin to match the audience sizes that YouTube can offer, and as such this move represents a significant blow to both the channel owners and the movement as a whole.

It remains to be seen whether other platforms will decide to follow Facebook in attempting to remove QAnon from their platforms entirely, and whether they will dedicate sufficient time and resources to implementing their decisions. The audience size of the major platforms is sufficient enticement to guarantee that QAnon promoters will never stop trying to regain access; any action against them will therefore require endless vigilance against rebranded replacements repopulating the platforms.

But it is equally important that social media companies conduct a detailed interrogation of how their platforms were used and abused to create this phenomenon in the first place. Each company has been criticised in the past for their failure to tackle extremist ideologies such as white supremacism and Islamic extremism, but one could argue that their platforms were merely reflecting wider societal phenomena. In QAnon, they have been responsible for allowing a new movement to be born and raised on their platforms; one that could have been prevented from reaching even a tiny fraction of its current size if they had acted sooner. Social media companies must learn the lessons from this disaster and apply them consistently going forward.
