
Be it Covid-19 conspiracy theories shared in WhatsApp groups, campaigns of harassment by Twitter trolls, or the proliferation of far-right propaganda on YouTube, there is no doubt that harms perpetrated by extremists within the online world remain a pressing issue. HOPE not hate’s research involves monitoring how extremists harm others online, and we are under no illusion as to the scale or breadth of the threat.

Today, major platforms like Facebook and Twitter are used by extremists for recruitment, propagandising at scale, disruption of mainstream debate, and the harassment of victims. Smaller platforms which have been co-opted, like Twitch and Discord, allow for further radicalisation and organising, as they are unable (or unwilling) to tackle extremists’ abuse of their sites. Some platforms are even bespoke, structured to benefit those promoting extremism, as HOPE not hate’s recent report into the video-sharing platform Bitchute highlighted.

In response to this environment, civil society organisations are doing vital work pressuring tech companies to take greater action against these issues. However, it is increasingly clear that these dangers also require a deeper, regulatory solution.

The move to introduce internet regulation of this nature is a huge step. As Alex Krasodomski-Jones of Demos has rightly argued, “It is barely an oversimplification to characterise the current debate on internet regulation as a fight over the things people see, and the things they don’t.”

To this end, the government’s Digital Charter initiative, and the work that stems from it, is a welcome move. The Bill, if it resembles the white paper that preceded it, will aim to tackle harmful content and behaviours online in their entirety, from those that fall within HOPE not hate’s remit, such as terrorist and hate content and activity, to issues as varied as child sexual exploitation and abuse, modern slavery, the sale of weapons and advocacy of self-harm.

With such breadth, there are concerns that the scope of the Bill will be too broad to be manageable, or that it will not be sufficiently detailed and could lead to hasty and flawed legislation. These are important considerations, but they do not preclude working out how particular areas of online harms could be tackled. How the far right’s actions manifest as harms in the digital realm is one such complex area, and we have to ensure that the government’s policy – in whatever form it takes – recognises and understands this.

Though aiming to remedy genuine harms, government regulation of our online lives also raises legitimate concerns over privacy and freedom of expression. We must address online harms whilst ensuring harms are not also inflicted through unfairly infringing on people’s freedoms. HOPE not hate recognises the importance of this balancing act, and encourages a form of regulation of platforms that places democratic rights front-and-centre.

In a world increasingly infused with the web, the significance of this legislation cannot be overstated, and getting it right will undoubtedly take rigorous reflection.

To that end, we encourage debate of the recommendations proposed in this report.

Finally, this is not just an opportunity to reduce the negative impacts of hostile and prejudiced online behaviour but also a chance to engage in a society-wide discussion about the sort of internet we do want. It is not enough merely to find ways to ban or suppress negative behaviour; we have to find a way to encourage and support positive online cultures.

As an organisation that attempts to understand and respond to the extremist political landscape in the UK, we are well aware of the importance of online activity to extremists today. Though we campaign against all manner of extremisms, HOPE not hate’s expertise and focus lie in tackling the organised far right.

As such, this report and our recommendations are particularly attuned to how legislation could undermine the online harms propagated by these actors.

At the same time, we recognise that far-right extremism does not exist in a vacuum and instead emerges from (and feeds back into) wider societal prejudices and inequalities. The activists and groups we campaign against target specific cohorts who are systemically on the receiving end of these prejudices and inequalities, especially women, members of ethnic minority groups, religious minority groups, and LGBTQ+ communities.

To the extent that they can be, these wider, systemic issues must be addressed in this legislation alongside efforts to curb extremism, and we encourage the government to listen to civil society recommendations on addressing these wider, societal factors online. In the UK, brilliant work is being done on this by groups including Glitch, the Antisemitism Policy Trust, Galop, The Fawcett Society, Leonard Cheshire and many others.

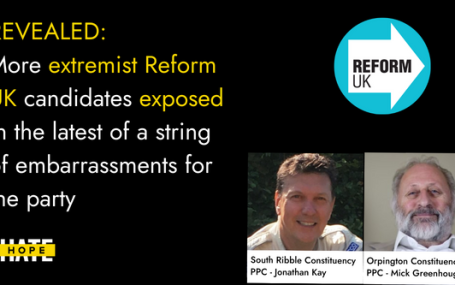
