In the wake of the spike in racist abuse on social media that followed England’s loss in the final of the Euro 2020 tournament and the tragic mass shooting in Plymouth, there has been a renewed debate about the failings of tech companies and the role of legislation in dealing with the problem of harmful content online. This has come in the midst of the ongoing debates surrounding the forthcoming Online Safety Bill, which has been released in draft form and will soon face pre-legislative scrutiny.

For years, civil society organisations have done vital work pressuring tech companies to take greater action to reduce harm online. However, it has long been clear that these dangers also require a regulatory solution. The move to introduce internet regulation of this nature is a huge step.

One of the key areas of debate at present is around the draft legislation’s inclusion of so-called ‘legal but harmful’ content.

Some perceive the inclusion of legal content in this bill as an untenable threat to freedom of expression. Regulation of our online lives certainly raises legitimate concerns over privacy and freedom of expression. However, as with the offline world, there is a balance of rights to be struck. Government must tackle online harms without infringing on people’s freedoms. It must also preserve freedom from abuse and harassment, and protect vulnerable groups who are targets of online hate. We must end up with a form of regulation of platforms that places democratic rights front-and-centre.

Much of the criticism of the inclusion of legal but harmful content within the bill focuses solely on what harmful speech might be removed by this legislation and ignores the plethora of voices that are already suppressed online due to the often harmful and toxic online environment. If done properly, the inclusion of legal but harmful content within the scope of this legislation could dramatically increase the ability of a wider range of people to exercise their free speech online by increasing the plurality of voices on platforms, especially from minority and persecuted communities. If we are genuinely committed to promoting democratic debate online, preserving the status quo and continuing to exclude these voices is not an option.

This is not just an opportunity to reduce the negative impacts of hostile and prejudiced online behaviour but also a chance to engage in a society-wide discussion about the sort of internet we do want. It is not enough merely to find ways to ban or suppress negative behaviour; we have to find a way to encourage and support positive online cultures. The companies in the scope of the online safety legislation occupy central roles in the public sphere today, providing key forums through which public debate occurs. It is vital that they ensure that the health of discussions is not undermined by those who spread hate and division.

At present, online speech that causes division and harm is often defended on the basis that to remove it would undermine free speech. In reality, allowing the amplification of such speech only erodes the quality of public debate and causes harm to the groups such speech targets. This defence, in theory and in practice, minimises free speech overall. This regulation should instead aim to maximise freedom of speech online for more people, especially those currently marginalised and attacked based on their gender, gender identity, race, disability, or sexual orientation, or, often, a combination of these characteristics.

Those condemning the Bill on free speech grounds underestimate the potential for social inequalities to be reflected in public debate, and disregard the nature and extent of these inequalities in the ‘marketplace of ideas’. As such, the position of some ‘free speech’ advocates can be paradoxical. They claim to value free speech above all else, yet propagate an unequal debate that further undermines the free speech of those who are already harmed by social inequalities. Some of those currently arguing for the removal of legal but harmful content from this legislation are instead proposing to criminalise speech that is currently legal – a proposal potentially at odds with the aim of preserving free expression.

When content breaks a platform’s policies around hate speech it must be removed. However, a regime based on systemic change rather than the takedown of individual pieces of content is more likely to be free speech-preserving. Rather than focusing on whether individuals are increasingly being prosecuted for their speech – in our view a greater threat to freedom of expression than having one’s online content removed – the Online Safety Bill focuses on how systems can be adopted that minimise the harm of certain forms of speech. All systems deployed by platforms are active choices: there is no ‘neutral’ or ‘default’ position that guarantees complete and open speech for all. The Online Safety Bill offers the potential to shift the scales from amplifying harm to reducing its spread by applying systems and processes to reduce the promotion and targeting of hate and abuse. This will create a more level playing field for all people participating online.

Prefer to listen? Click the play button to hear the audio version. Harry Shukman Reform UK is staffed by oddballs and enigmas, but none odder…

HOPE not hate reveals the key figures behind Raise the Colours, exposing their criminal histories and their intimidation of local people. Unite the Kingdom attendees…

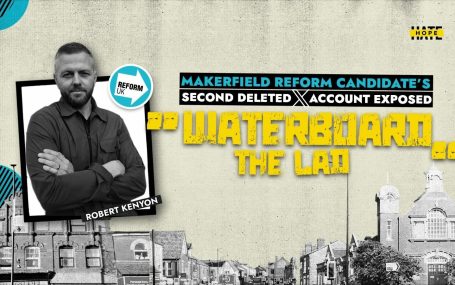

By Paul Kershaw and Sarah Hadley On a deleted social media account, Reform UK’s candidate in the upcoming Makerfield parliamentary by-election, Rob Kenyon, made creepy…